6 现代卷积神经网络

1 深度卷积神经网络 (AlexNet)

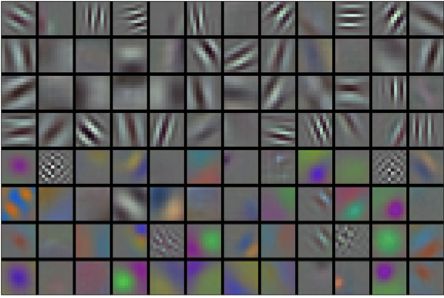

与传统机器学习相信模型的重要性不同的是, 计算机视觉领域, 人们更相信数据集的质量, 以及特征提取. 在 AlexNet 中, 首先人们来检测图片中的局部特征, 例如下图:

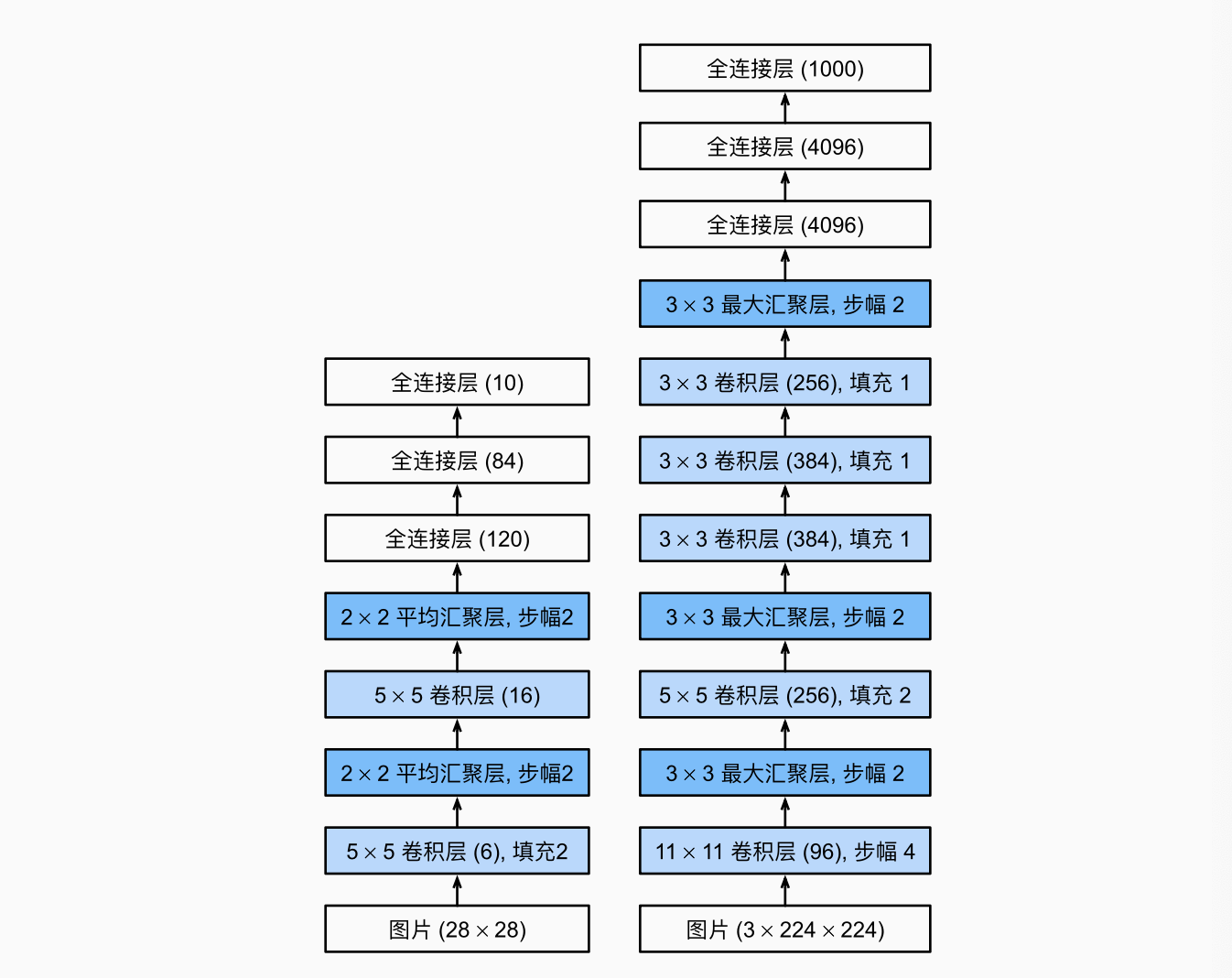

AlexNet 的架构和 LeNet 原理差不多:

为了应对更复杂的数据集, AlexNet 使用了更多的卷积层和更多的参数

| AlexNet | LeNet | |

|---|---|---|

| 数据集 | ImageNet (1000 class) | Fasion-MNIST (10 class) |

| 激活函数 | ReLU | Sigmoid |

| 容量控制 | Dropout | L2 |

| 图像增强 | 翻转、裁切、变色 | - |

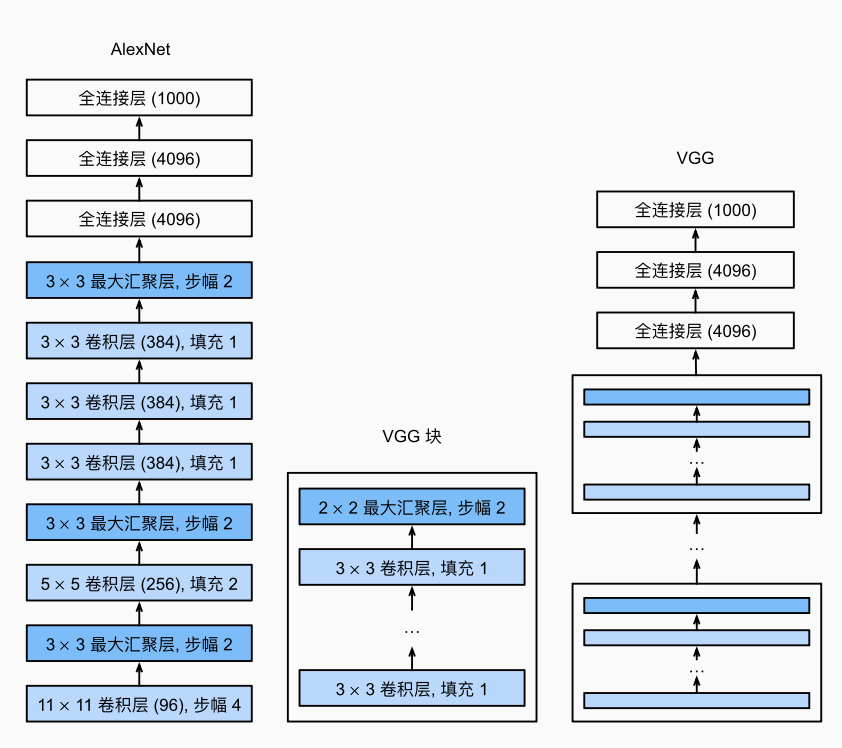

2 使用块的网络 (VGG)

经典 CNN 中, 基本组成部分是由卷积层、激活函数和汇聚层组成的序列. 一个 VGG 块 是由一系列卷积层组成、加上空间下采样的最大汇聚层:

import torch

from torch import nn

def vgg_block(num_convs, in_channels, out_channels):

"""VGG块的实现"""

layers = []

for _ in range(num_convs):

layers.append(nn.Conv2d(in_channels, out_channels,

kernel_size=3, padding=1))

layers.append(nn.ReLU())

in_channels = out_channels #只有第一层in_channel与out不同

layers.append(nn.MaxPool2d(kernel_size=2, stride=2))

return nn.Sequential(*layers)

深层且窄的卷积 (3x3) 比浅层且宽的卷积更有效!

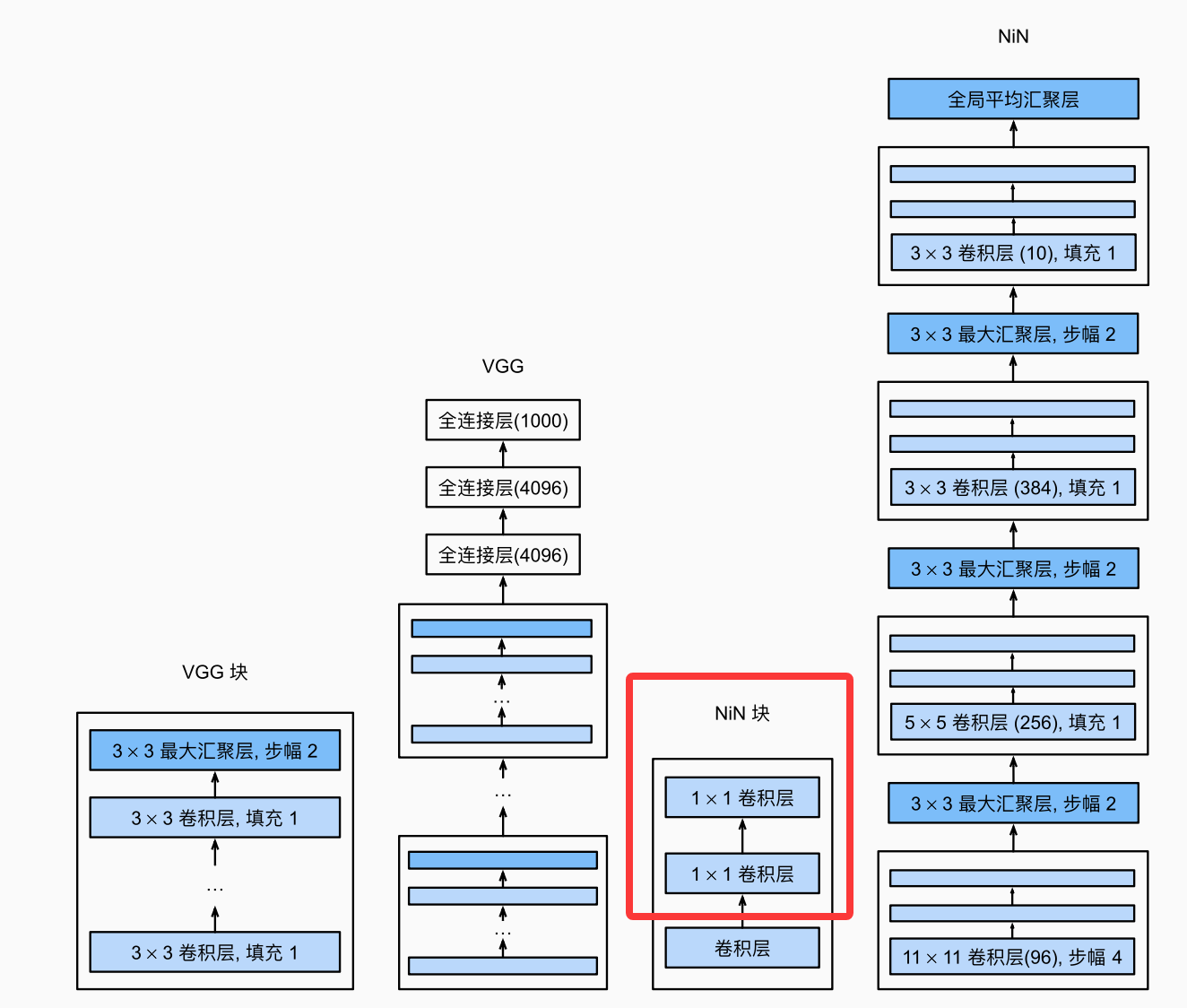

3 网络中的网络 (NiN)

NiN 会在图像的每个像素位置(也即有若干通道) 应用一个全连接层(可以认为是 1x1 的卷积层).

def nin_block(in_channels, out_channels, kernel_size, strides, padding):

return nn.Sequential(

nn.Conv2d(in_channels, out_channels, kernel_size, strides, padding),

nn.ReLU(),

nn.Conv2d(out_channels, out_channels, kernel_size=1), nn.ReLU(),

nn.Conv2d(out_channels, out_channels, kernel_size=1), nn.ReLU())

NiN 去除了容易过拟合的全连接层, 换成了全局平均汇聚层(在所有位置进行求和)

4 含并行连结的网络 (GoogLeNet)

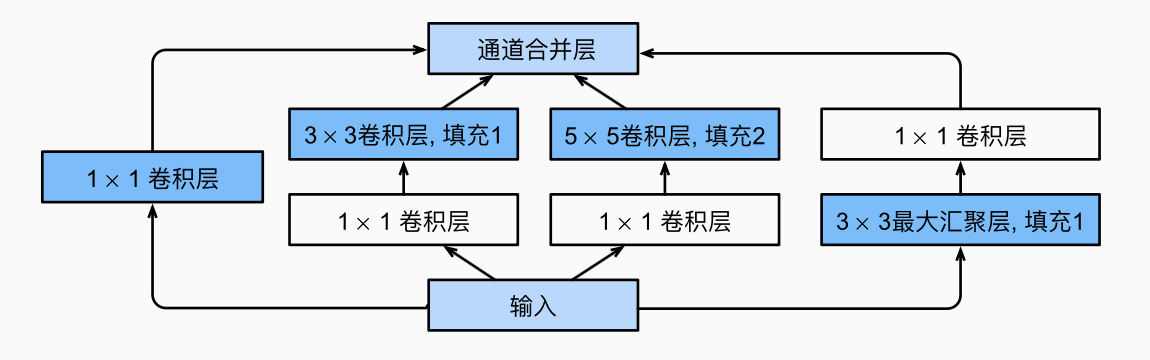

4.1 Inception 块

GoogLeNet 的基本卷积块.

from torch.nn import functional as F

class Inception(nn.Module):

def __init__(self, in_channels, c1, c2, c3, c4, **kwargs):

#c1-c4: 每条线路的输出通道

super(Inception, self).__init__(**kwargs)

self.p1_1 = nn.Conv2d(in_channels, c1, kernel_size=1)

self.p2_1 = nn.Conv2d(in_channels, c2[0], kernel_size=1)

self.p2_2 = nn.Conv2d(c2[0], c2[1], kernel_size=3, padding=1)

self.p3_1 = nn.Conv2d(in_channels, c3[0], kernel_size=1)

self.p3_2 = nn.Conv2d(c3[0], c3[1], kernel_size=5, padding=2)

self.p4_1 = nn.MaxPool2d(kernel_size=3, stride=1, padding=1)

self.p4_2 = nn.Conv2d(in_channels, c4, kernel_size=1)

def forward(self, x):

p1 = F.relu(self.p1_1(x))

p2 = F.relu(self.p2_2(F.relu(self.p2_1(x))))

p3 = F.relu(self.p3_2(F.relu(self.p3_1(x))))

p4 = F.relu(self.p4_2(self.p4_1(x)))

return torch.cat((p1, p2, p3, p4), dim=1) #在通道维度

4.2 GoogLeNet 模型

5 批量规范化 (BatchNorm)

如果我们对输入数据单个进行规范化, 那均值都是 0, 学不到任何东西. 因此需要批量规范: 设批量

形式上

5.1 批量规范化层

和传统层相比, BatchNorm 层不能忽略批量大小.

- 对于全连接层, BN 在激活、仿射变换之间.

- 对卷积层, 同样在卷积之后、激活函数之前应用, 对卷积的多个通道分别执行

和 dropout 一样, batchnorm 在训练、测试的表现不一样. 在测试时, 不需要样本均值的噪声和

5.2 从零实现

def batch_norm(X, gamma, beta, moving_mean, moving_var, eps, momentum):

if not torch.is_grad_enabled():#判断训练还是测试模式; 当前是测试模式

X_hat = (X - moving_mean) / torch.sqrt(moving_var + eps)

else:#训练模式

assert len(X.shape) in (2, 4)

if len(X.shape) == 2:#全连接层的情况

mean = X.mean(dim=0)

var = ((X - mean) ** 2).mean(dim=0)

else:#二维卷积层的情况

mean = X.mean(dim=(0,2,3), keepdim=True)

var = ((X - mean) ** 2).mean(dim=(0,2,3), keepdim=True)

X_hat = (X - mean) / torch.sqrt(var + eps)

moving_mean = momentum * moving_mean + (1.0 - momentum) * mean

moving_var = momentum * moving_var + (1.0 - momentum) * var

Y = gamma * X_hat + beta

return Y, moving_mean.data, moving_var.data

net = nn.Sequential(

nn.Conv2d(1,6, kernel_size=5), BatchNorm(6, num_dims=4), nn.Sigmoid(),

nn.AvgPool2d(kernel_size=2, stride=2),

nn.Conv2d(6, 16, kernel_size=5), BatchNorm(16, num_dims=4), nn.Sigmoid(),

nn.AvgPool2d(kernel_size=2, stride=2), nn.Flatten(),

nn.Linear(16*4*4, 120), BatchNorm(120, num_dims=2), nn.Sigmoid(),

nn.Linear(120, 84), BatchNorm(84, num_dims=2), nn.Sigmoid(),

nn.Linear(84, 10))

)

5.3 简明实现

可以直接用 nn.BatchNorm2d:

net = nn.Sequential(

nn.Conv2d(1, 6, kernel_size=5), nn.BatchNorm2d(6), nn.Sigmoid(),

nn.AvgPool2d(kernel_size=2, stride=2),

nn.Conv2d(6, 16, kernel_size=5), nn.BatchNorm2d(16), nn.Sigmoid(),

nn.AvgPool2d(kernel_size=2, stride=2), nn.Flatten(),

nn.Linear(256, 120), nn.BatchNorm1d(120), nn.Sigmoid(),

nn.Linear(120, 84), nn.BatchNorm1d(84), nn.Sigmoid(),

nn.Linear(84, 10))

6 残差网络 (ResNet)

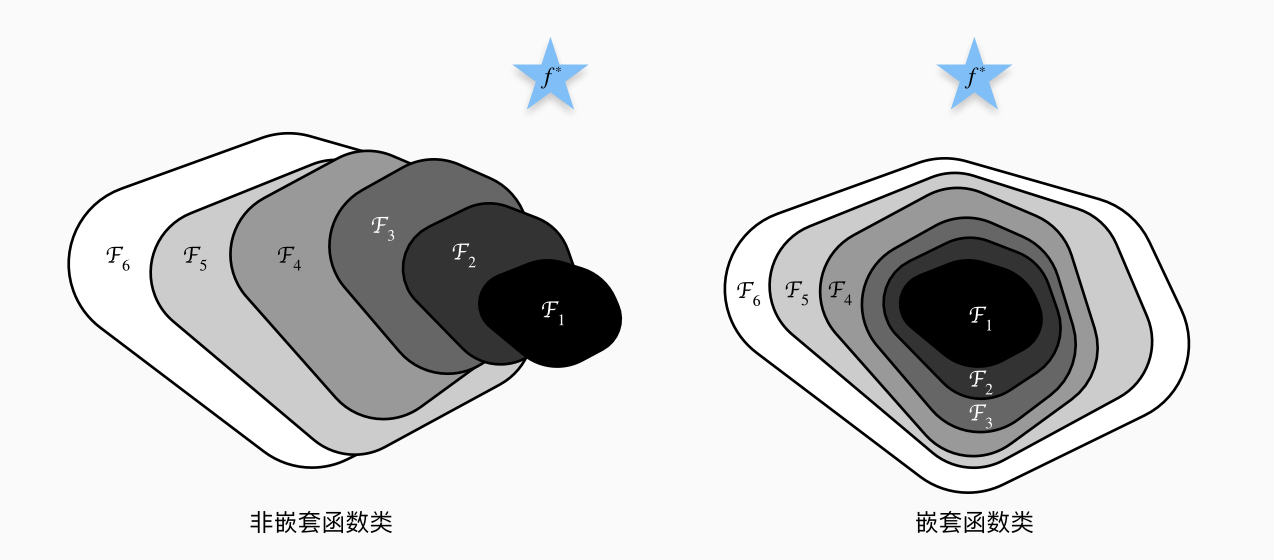

6.1 函数类

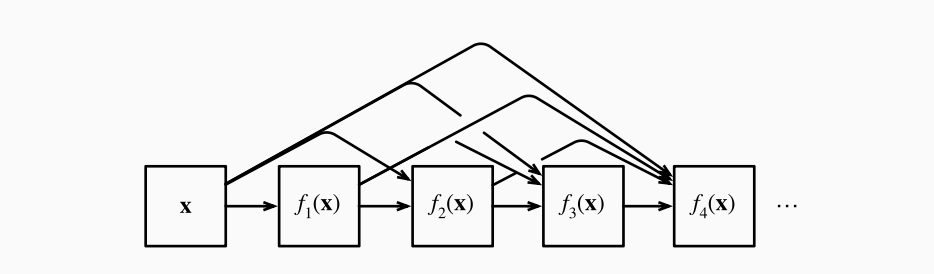

假设一类特定的神经网络架构

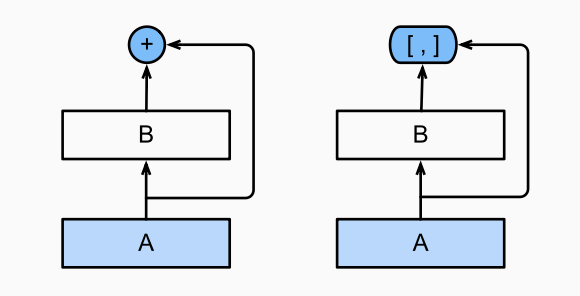

为了让

从图中看, 我们需要保证嵌套函数类. 这样, 对于新的架构, 至少我们有恒等映射

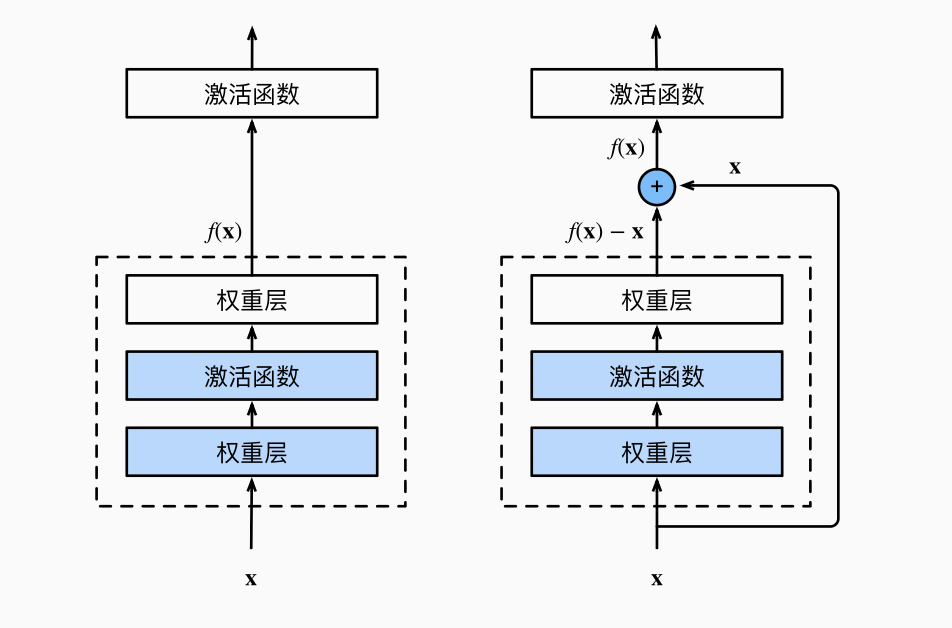

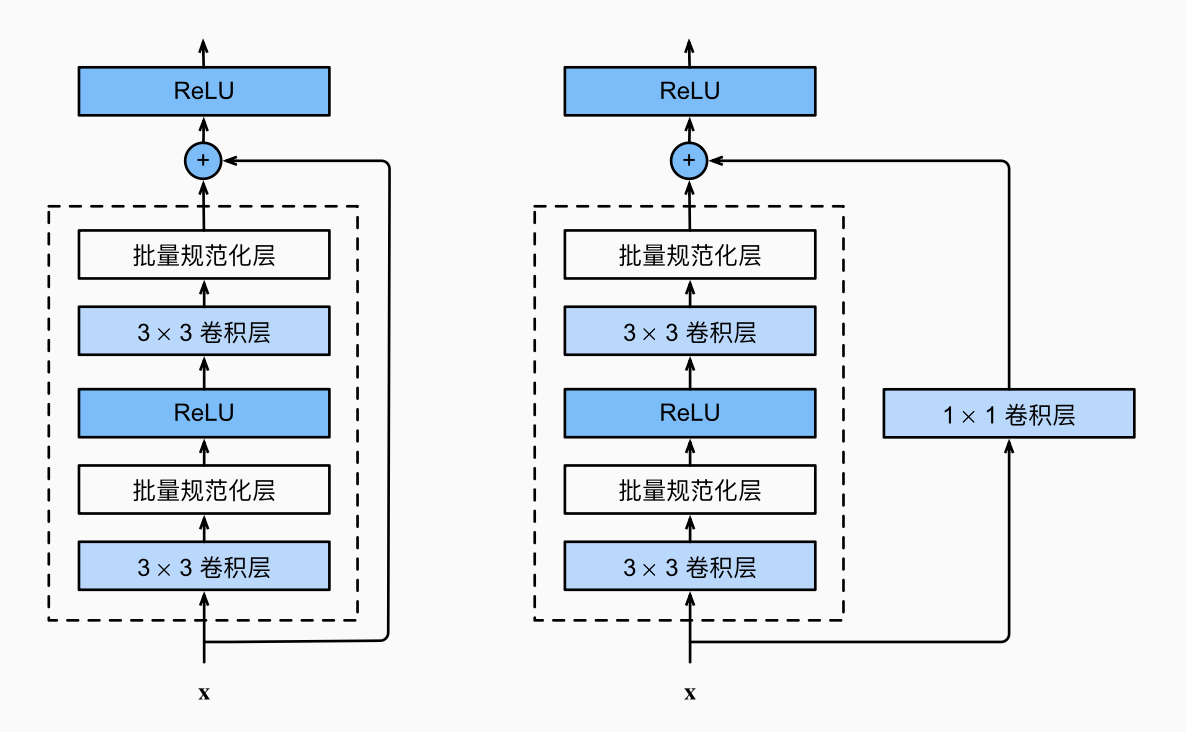

6.2 残差块

如图, 传统结构中, 我们希望通过这个盒子, 将

ResNet 沿用了 VGG 的 3x3 卷积层设计. 1x1 卷积层用来改变通道数, 将输入变换成需要的形状然后相加.

class Residual(nn.Module):

def __init__(self, input_channels, num_channels,

use_1x1conv=False, strides=1):

super().__init__()

self.conv1 = nn.Conv2d(input_channels, num_channels,

kernel_size=3, padding=1, stride=strides)

self.conv2 = nn.Conv2d(num_channels, num_channels,

kernel_size=3, padding=1)

if use_1x1conv:

self.conv3 = nn.Conv2d(input_channels, num_channels,

kernel_size=1, stride=strides)

else:

self.conv3 = None

self.bn1 = nn.BatchNorm2d(num_channels)

self.bn2 = nn.BatchNorm2d(num_channels)

def forward(self, X):

Y = F.relu(self.bn1(self.conv1(X)))

Y = self.bn2(self.conv2(Y))

if self.conv3:

X = self.conv3(X)

Y += X

return F.relu(Y)

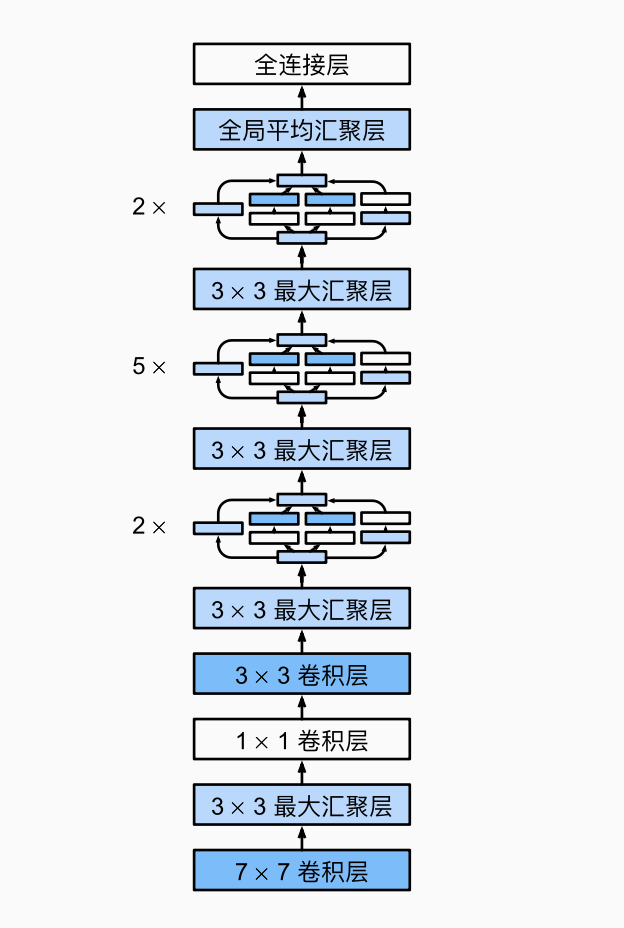

各自的网络结构如图:

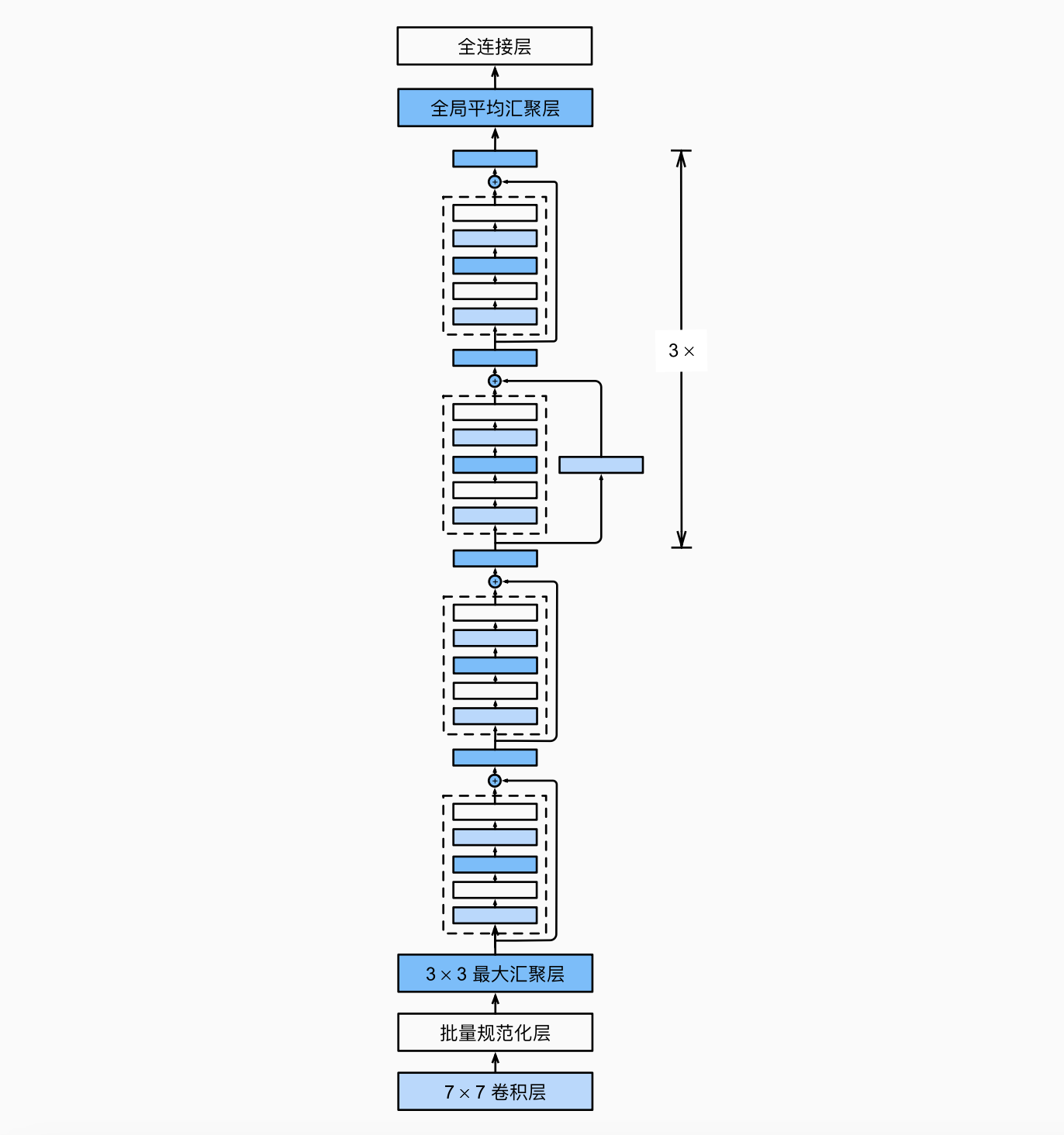

6.3 ResNet 模型

与 GoogLeNet 类似, 将 inception 块变成了残差块.

7 稠密连接网络 (DenseNet)

ResNet 将函数进行拆分

7.1 稠密块

这里用了改良版的"BatchNorm, 激活, 卷积":

def conv_block(input_channels, num_channels):

return nn.Sequential(

nn.BatchNorm2d(input_channels), nn.ReLU(),

nn.Conv2d(input_channels, num_channels, kernel_size=3, padding=1))

class DenseBlock(nn.Module):

def __init__(self, num_convs, input_channels, num_channels):

super(DenseBlock, self).__init__()

layer = []

for i in range(num_convs):

layer.append(conv_block(

num_channels * i + input_channels, num_channels))

self.net = nn.Sequential(*layer)

def forward(self, X):

for blk in self.net:

Y = blk(X)

#连接通道维度上每个块的输入和输出

X = torch.cat((X, Y), dim=1)

return X

7.2 过渡层

稠密块会增加通道数, 过渡层则控制模型复杂度(通过 1x1 的卷积层来减小通道数), 再用汇聚层减半宽高.

def transition_block(input_channels, num_channels):

return nn.Sequential(

nn.BatchNorm2d(input_channels), nn.ReLU(),

nn.Conv2d(input_channels, num_channels, kernel_size=1),

nn.AvgPool2d(kernel_size=2, stride=2))

7.3 DenseNet 模型

将 ResNet 的四个残差块替换为四个稠密块

b1 = nn.Sequential(

nn.Conv2d(1, 64, kernel_size=7, stride=2, padding=3),

nn.BatchNorm2d(64), nn.ReLU(),

nn.MaxPool2d(kernel_size=3, stride=2, padding=1))

#num_channels为当前的通道数

num_channels, growth_rate = 64, 32

num_convs_in_dense_blocks = [4, 4, 4, 4]

blks = []

for i, num_convs in enumerate(num_convs_in_dense_blocks):

blks.append(DenseBlock(num_convs, num_channels, growth_rate))

#上一个稠密块的输出通道数

num_channels += num_convs * growth_rate

#在稠密块之间添加一个转换层,使通道数量减半

if i != len(num_convs_in_dense_blocks) - 1:

blks.append(transition_block(num_channels, num_channels // 2))

num_channels = num_channels // 2